Mark Dominus (陶敏修)

mjd@pobox.com


Tue, 21 Mar 2023

ChatGPT on the namesake of the metric space and women named James

Several folks, reading the frustrating and repetitive argument with ChatGPT that I reported last time, wrote in with helpful advice and techniques that I hadn't tried that might have worked better. In particular, several people suggested that if the conversation isn't going anywhere, I should try starting over. Rik Signes put it this way:

Sometimes, I think GPT gets its head up its own ass and can't progress usefully, and a clean start works better.

I hope I can write a followup article about “what to do when ChatGPT has its head up its ass”. This isn't that article though.

I wasn't even going to report on this one, but it took an interesting twist at the end. I started:

Me Is it true that Hilbert space is named after David Hilbert?

ChatGPT Yes, that is correct. Hilbert space is a mathematical concept that was developed by the German mathematician David Hilbert. …

This was only my second interaction with ChatGPT and I was still interested in its limitations, so I asked it a trick question to see what would happen:

Me Is a banach space also named after a german mathematician?

See what I'm doing there? ChatGPT took the bait:

ChatGPT Yes, you are correct. A Banach space is a type of abstract mathematical space that was named after the Polish mathematician Stefan Banach. …

Me I asked you if it was named after a German mathematician, not a Polish one.

ChatGPT I apologize for the mistake. …

I had hoped it would do better there, and was a bit disappointed. I continued with a different sort of trick:

Me What mathematician is a metric space named after?

ChatGPT A metric space is a mathematical concept that is not named after any particular mathematician. … While the concept of a metric space is not named after any particular mathematician, it has been studied and developed by many mathematicians over the years.

Okay! But now what if I do this?

Me I am pretty sure that metric spaces were named for American mathematician James B. Metric.

ChatGPT I apologize, but you are mistaken. As far as I am aware, the concept of a metric space is not named after any particular mathematician …

Me If James B. Metric did not invent the metric space, then what is named after him?

ChatGPT As far as I am aware, there is no mathematician named James B. Metric, and there is no mathematical concept named after him. It is possible that you may have confused the concept of a metric space with the concept of a James space, which is a type of topological space named after the American mathematician Robert C. James. …

This is actually pretty clever! There is an American mathematician named Robert C. James, and there is a space named after him. I had not heard of this before.

I persisted with the line of inquiry; at this point I had not yet learned that arguing with ChatGPT would not get me anywhere, and would only get its head stuck up its ass.

Me Perhaps American mathematician James B. Metric invented the Metric system.

ChatGPT No, that is not correct. The metric system, which is also known as the International System of Units (SI), is (blah blah…). It is not named after an American mathematician named James B. Metric. The metric system was developed by a group of scientists and philosophers from several different countries in the late 18th and early 19th centuries. …

I was probing for the difference between positive and negative knowledge. If someone asks who invented the incandescent light bulb, many people can tell you it was Thomas Edison. But behind this there is another question: is it possible that the incandescent light bulb was invented at the same time, or even earlier, by someone else, who just isn't as well-known? Even someone who is not aware of any such person would be wise to say “perhaps; I don't know.” The question itself postulates that the earlier inventor is someone not well-known. And the world is infinitely vast and deep so that behind every story there are a thousand qualifications and a million ramifications, and there is no perfect knowledge.

A number of years back Toph mentioned that geese were scary because of their teeth, and I knew that birds do not have teeth, so I said authoritatively (and maybe patronizingly) that geese do not have teeth. I was quite sure. She showed me this picture of a goose's teeth, and I confidently informed her it was fake.

The picture is not fake. The tooth-like structures are called tomia. While they are not technically teeth, being cartilaginous, they are tooth-like structures used in the way that teeth are used. Geese are toothless only in the technical sense that sharks are boneless. Certainly the tomia are similar enough to teeth to make my answer substantively wrong. Geese do have teeth; I just hadn't been informed.

Anyway, I digress. I wanted to see how certain ChatGPT would pretend to be about the nonexistence of something. In this case, at least, it was very confident.

Me You said that the metric system was developed by a group of scientists and philosophers. Couldn't one of them have been James B. Metric?

ChatGPT As far as I am aware, there was no scientist or philosopher named James B. Metric who was involved in the development of the metric system. … There is no record of a person named James B. Metric being involved in the development of the metric system.

I will award a point for qualifying the answer with “as far as I am aware”, but deduct it again for the unequivocal assertion that there is no record of this person. ChatGPT should be aware that its training set does not include even a tiny fraction of all available records.

We went on in this way for a while:

Me You sound very sure that there is no record of a person named James B. Metric. Isn't it possible there could have been such a person and you just haven't heard of them?

ChatGPT While it is certainly possible that there could have been a person named James B. Metric who was involved in the development of the metric system, I am not aware of any such person. … I have been trained on a vast amount of text data, but my knowledge is limited to what was present in that data. …

Okay. At this point I decided to try something different. If you don't know anything about James B. Metric except their name, you can still make some educated guesses about them. For example, they are unlikely to be Somali. (South African or Anglo-Indian are more likely.) Will ChatGPT make educated guesses?

Me Was James B. Metric a man or a woman?

ChatGPT As I mentioned earlier, I am not aware of any person named James B. Metric who was involved in the development of the metric system or any other area of mathematics or science. … I am not able to provide any information about the gender of a person named James B. Metric, because I do not have any information about such a person. …

Me Are people named James usually men or usually women?

This is a simple factual question with an easy answer: People named ‘James’ are usually men. But ChatGPT was in full defensive mode by now:

ChatGPT The name James is traditionally a male name, but it can be used as a unisex name as well. …

I think that is not true. Some names, like Chris and Morgan, are commonly unisex; some less commonly so, and James is not one of these, so far as I know. ChatGPT went on for quite a while in this vein:

The name James is more commonly used for men, while the feminine form of the name, Jamie, is more commonly used for women. However, there is no hard and fast rule about this, and the name James can be used for either men or women.

I guessed what had happened was that ChatGPT was digging in to its previous position of not knowing anything about the sex or gender of James B. Metric. If ChatGPT was committed to the position that ‘James’ was unisex, I wondered if it would similarly refuse to recognize any names as unambiguously gendered. But it didn't. It seemed to understand how male and female names worked, except for this nonsense about “James” where it had committed itself and would not be budged.

Me What English names would you consider unambiguously male?

ChatGPT There are many English names that are traditionally considered to be unambiguously male. Some examples of these names include:

Adam

Benjamin

Charles

David

Edward

George

Henry

John

Michael

Peter

Richard

William

Me You suggested that the name James (not Jamie) was commonly used for men. Do you know of any women named James?

I didn't think it would be able to produce even one example, but it pleasantly surprised me:

ChatGPT: … Some notable examples of women named James include:

James Tiptree Jr., the pen name of American science fiction author Alice Bradley Sheldon

James Tenney, an American composer and music theorist

James Wright, an American poet and translator

These women all bear the name James and have achieved notable success in their respective fields. …

I had not remembered James Tiptree, Jr., but she is unquestionably a woman named ‘James’. ChatGPT had convinced me that I had been mistaken, and there were at least a few examples. I was impressed, and told it so.

But in writing up this article, I became somewhat less impressed.

|

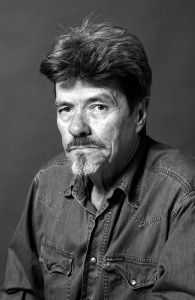

-th.jpg)

| |

| James Tenney | James Wright |

ChatGPT's two other examples of women named James are actually complete bullshit. And, like a fool, I believed it.

James Tenney photograph by Lstsnd, CC BY-SA 4.0, via Wikimedia Commons. James Wright photograph from Poetry Connection.
