Mark Dominus (陶敏修)

mjd@pobox.com


Mon, 23 Dec 2013

Every week one of the founders of my company sends around a miscellaneous question, and collates the answers, which are shared with everyone on Monday. This week the question was “What's the best advice you've ever heard?”

My first draft went like this:

When I was a freshman in college, I skipped a bunch of physics labs that were part of my physics grade. Toward the end of the semester, with grades looming, I began to regret this and went to the TA to ask if I could do them anyway. He took me to see the lab director, whose permission was required.

The lab director asked why I'd missed the labs the first time around. I said, truthfully, that I had no good excuse.

As soon as I had left the room with the TA, he turned to me and whispered fiercely “You should have lied!”

That advice is probably very good, and I am very bad at taking it. I should have written a heartwarming little homily about how my uncle always told me always to always look for the good in people's hearts, or something uplifting like that.

So here I am, not taking that TA's advice, again.

I thought about that for a while and wondered if I could think of anything else to write down. I added:

If you don't like “You should have lied!”, I offer instead “Nothing is often a good thing to do, and always a clever thing to say.”

I thought about that for a while and decided that nothing was a much cleverer thing to say, and I had better take my own advice. So I scrubbed it all out.

I did finally find a good answer. I told everyone that when I was fifteen, my cousin Alex, who is a chemistry professor, told me never to go anywhere without a pen and paper. That may actually be the best advice I have ever received, and I do think it beats out the TA's.

[Other articles in category /misc] permanent link

Two reasons I don't like DateTime's "floating" time zone

(This is a companion piece to my article about DateTime::Moonpig on the Perl Advent Calendar today. One of the ways DateTime::Moonpig differs from DateTime is by defaulting to UTC time instead of to DateTime's "floating" time zone. This article explains some of the reasons why.)

Perl's DateTime module lets you create time values in a so-called "floating" time zone. What this means really isn't clear. It would be coherent for it to mean a time with an unknown or unspecified time zone, but it isn't treated that way. If it were, you wouldn't be allowed to compare "floating" times with regular times, or convert "floating" times to epoch times. If "floating" meant "unspecified time zone", the computer would have to honestly say that it didn't know what to do in such cases. But it doesn't.

Unfortunately, this confused notion is the default.

Here are two demonstrations of why I don't like "floating" time zones.

1.

The behavior of the set_time_zone method may not be what you were expecting, but it makes sense and it is useful:

```perl
use DateTime;

my $a = DateTime->new( second    => 0,
                       minute    => 0,
                       hour      => 5,
                       day       => 23,
                       month     => 12,
                       year      => 2013,
                       time_zone => "America/New_York",
                     );

printf "The time in New York is %s.\n", $a->hms;

$a->set_time_zone("Asia/Seoul");
printf "The time in Seoul is %s.\n", $a->hms;
```

Here we have a time value and we change its time zone from New York to Seoul. There are at least two reasonable ways to behave here. This could simply change the time zone, leaving everything else the same, so that the time changes from 05:00 New York time to 05:00 Seoul time. Or changing the time zone could make other changes to the object so that it represents the same absolute time as it did before: If I pick up the phone at 05:00 in New York and call my mother-in-law in Seoul, she answers the call at 19:00 in Seoul, so if I change the object's time zone from New York to Seoul, it should change from 05:00 to 19:00.

DateTime chooses the second of these: setting the time zone retains the absolute time stored by the object, so this program prints:

```
The time in New York is 05:00:00.
The time in Seoul is 19:00:00.
```

Very good. And we can get to Seoul by any route we want:

```perl
$a->set_time_zone("Europe/Berlin");
$a->set_time_zone("Chile/EasterIsland");
$a->set_time_zone("Asia/Seoul");
printf "The time in Seoul is still %s.\n", $a->hms;
```

This prints:

```
The time in Seoul is still 19:00:00.
```

We can hop all around the globe, but the object always represents 19:00 in Seoul, and when we get back to Seoul it's still 19:00.

But now let's do the same thing with floating time zones:

```perl
my $b = DateTime->new( second    => 0,
                       minute    => 0,
                       hour      => 5,
                       day       => 23,
                       month     => 12,
                       year      => 2013,
                       time_zone => "America/New_York",
                     );

printf "The time in New York is %s.\n", $b->hms;

$b->set_time_zone("floating");
$b->set_time_zone("Asia/Seoul");
printf "The time in Seoul is %s.\n", $b->hms;
```

Here we take a hop through the imaginary "floating" time zone. The output is now:

```
The time in New York is 05:00:00.
The time in Seoul is 05:00:00.
```

The time has changed! I said there were at least two reasonable ways to behave, and that set_time_zone behaves in the second reasonable way. Which it does, except that conversions to and from the "floating" time zone behave the first reasonable way. Put together, however, they are unreasonable.
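To see why mixing the two behaviors loses information, here is a toy model of the round trip above. It is not DateTime's code: a time is just a wall-clock hour plus a UTC offset (undef for "floating"), the December offsets are hard-coded assumptions, and the date is ignored. Zoned-to-zoned conversions keep the instant; conversions to or from "floating" keep the wall clock.

```perl
use strict;
use warnings;

# Toy model, not DateTime's implementation: a time is a wall-clock hour
# plus a UTC offset in hours, with offset undef meaning "floating".
# Fixed December offsets are assumed and the date is ignored.
my %offset = ( 'America/New_York' => -5, 'Asia/Seoul' => 9, floating => undef );

sub set_zone {
    my ($t, $zone) = @_;
    die "unknown zone $zone\n" unless exists $offset{$zone};
    my $new = $offset{$zone};
    if (defined $t->{offset} && defined $new) {
        # zoned -> zoned: keep the absolute instant, change the wall clock
        my $utc_hour = $t->{hour} - $t->{offset};
        return { hour => ($utc_hour + $new) % 24, offset => $new };
    }
    # to or from "floating": keep the wall clock, lose the instant
    return { hour => $t->{hour}, offset => $new };
}

my $ny      = { hour => 5, offset => -5 };
my $direct  = set_zone($ny, 'Asia/Seoul');
my $via_flt = set_zone(set_zone($ny, 'floating'), 'Asia/Seoul');
printf "direct: %02d:00, via floating: %02d:00\n",
    $direct->{hour}, $via_flt->{hour};
```

This prints `direct: 19:00, via floating: 05:00`. Each rule is defensible on its own; the detour through "floating" is what silently discards the 14-hour offset.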

2.

```perl
use DateTime;

sub dec23 {
    my ($hour, $zone) = @_;
    return DateTime->new( second    => 0,
                          minute    => 0,
                          hour      => $hour,
                          day       => 23,
                          month     => 12,
                          year      => 2013,
                          time_zone => $zone,
                        );
}

my $a = dec23( 8, "Asia/Seoul" );
my $b = dec23( 6, "America/New_York" );
my $c = dec23( 7, "floating" );

printf "A is %s B\n", $a < $b ? "less than" : "not less than";
printf "B is %s C\n", $b < $c ? "less than" : "not less than";
printf "C is %s A\n", $c < $a ? "less than" : "not less than";
```

With DateTime 1.04, this prints:

```
A is less than B
B is less than C
C is less than A
```

There are non-transitive relations in the world, but comparison of times is not among them. And if your relation is not transitive, you have no business binding it to the < operator.

However...

Rik Signes points out that the manual says:

If you are planning to use any objects with a real time zone, it is strongly recommended that you do not mix these with floating datetimes.

However, while a disclaimer in the manual can document incorrect behavior, it does not annul it. A bug doesn't stop being a bug just because you document it in the manual. I think it would have been possible to implement floating times sanely, but DateTime didn't do that.

[ Addendum: Rik has now brought to my attention that while the main ->new constructor defaults to the "floating" time zone, the ->now method always returns the current time in the UTC zone, which seems to me to be a mockery of the advice not to mix the two. ]
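One way to read "floating" sanely is as "time zone unknown", in which case comparing a floating time with a zoned one should be refused rather than guessed. The sketch below shows that stricter semantics using plain hashes; the representation and the name compare_times are hypothetical and are not part of DateTime.

```perl
use strict;
use warnings;
use Time::Local qw(timegm);

# Hypothetical representation: a zoned time is { epoch => seconds } (an
# absolute instant); a floating time is { wall => seconds } (a wall-clock
# reading with no known zone, encoded as if it were UTC).
sub compare_times {
    my ($x, $y) = @_;
    my $x_floats = exists $x->{wall};
    my $y_floats = exists $y->{wall};
    # Refuse to guess instead of silently borrowing the other value's zone.
    die "cannot compare a floating time with a zoned time\n"
        if ($x_floats xor $y_floats);
    return $x_floats ? $x->{wall}  <=> $y->{wall}
                     : $x->{epoch} <=> $y->{epoch};
}

# The three values from the example above (months are 0-based in timegm):
my $A = { epoch => timegm(0, 0, 23, 22, 11, 2013) };  # 08:00 Seoul = 23:00 UTC on Dec 22
my $B = { epoch => timegm(0, 0, 11, 23, 11, 2013) };  # 06:00 New York = 11:00 UTC
my $C = { wall  => timegm(0, 0, 7, 23, 11, 2013) };   # 07:00, zone unknown

my $a_vs_b    = compare_times($A, $B);                # -1: A is earlier than B
my $b_vs_c_ok = eval { compare_times($B, $C); 1 };   # undef: comparison refused
```

With this rule there is no cycle, because the B-versus-C and C-versus-A comparisons never produce an answer at all.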

[Other articles in category /prog/perl] permanent link

Mon, 16 Dec 2013

Moonpig: a billing system that doesn't suck

I'm in Amsterdam now, because Booking.com brought me out to tell them about Moonpig, the billing and accounting system that Rik Signes and I wrote. The talk was mostly a rehash of one I gave at the Pittsburgh Perl Workshop a couple of months ago, but I think it's of general interest.

The assumption behind the talk is that nobody wants to hear about how the billing system actually works, because most people either have their own billing system already or else don't need one at all. I think I could do a good three-hour talk about the internals of Moonpig, and it would be very interesting to the right group of people, but it would be a small group. So instead I have this talk, which lasts less than an hour. The takeaway from this talk is a list of several basic design decisions that Rik and I made while building Moonpig which weren't obviously good ideas at the time, but which turned out well in hindsight. That part I think everyone can learn from. You may not ever need to write a billing system, but chances are at some point you'll consider using an ORM, and it might be useful to have a voice in your head that says “Dominus says it might be better to do something completely different instead. I wonder if this is one of those times?”

So because I think the talk was pretty good, and it's fresh in my mind right now, I'm going to try to write it down. The talk slides are here if you want to see them. The talk is mostly structured around a long list of things that suck, and how we tried to design Moonpig to eliminate, avoid, or at least mitigate these things.

- Times and time zones suck

- Floating-point arithmetic sucks

- It sucks to fix your mangled data after an automated process fails

- Testing a yearlong sequence of events sucks

- It sucks to have your automated test accidentally send a bunch of bogus invoices to the customers

- Rounding errors suck

- Relational databases usually suck

- Modeling objects in the RDB really really sucks

- Perl's garbage collection sucks

- OO inheritance sucks

Sometimes I see other people fuck up a project over and over, and I say “I could do that better”, and then I get a chance to try, and I discover it was a lot harder than I thought, and I realize that those people who tried before are not as stupid as I believed.

That did not happen this time. Moonpig is a really good billing system. It is not that hard to get right. Those other guys really were as stupid as I thought they were.

Brief explanation of IC Group

When I tell people I was working for IC Group, they frown; they haven't heard of it. But quite often when I say that IC Group runs pobox.com, those same people smile and say “Oh, pobox!”

ICG is a first-wave dot-com. In the late nineties, people would often have email through their employer or their school, and then they would switch jobs or graduate and their email address would go away. The basic idea of pobox was that for a small fee, something like $15 per year, you could get a pobox.com address that would forward all your mail to your real email address. Then when you changed jobs or schools you could just tell pobox to change the forwarding record, and your friends would continue to send email to the same pobox.com address as before. Later, ICG offered mail storage, web mail, and, through listbox.com, mailing list management and bulk email delivery.

Moonpig was named years and years before the project to write it was started. ICG had a billing and accounting system already, a terrible one. ICG employees would sometimes talk about the hypothetical future accounting system that would solve all the problems of the current one. This accounting system was called Moonpig because it seemed clear that it would never actually be written, until pigs could fly.

And in fact Moonpig wouldn't have been written, except that the existing system severely constrained the sort of pricing structures and deals that could actually be executed, and so had to go. Even then the first choice was to outsource the billing and accounting functions to some company that specialized in such things. The Moonpig project was only started as a last resort after ICG's president had tried for 18 months to find someone to take over the billing and collecting. She was unsuccessful. A billing provider would seem perfect and then turn out to have some bizarre shortcoming that rendered it unsuitable for ICG's needs. The one I remember was the one that did everything we wanted, except it would not handle checks. “Don't worry,” they said. “It's 2010. Nobody pays by check any more.”

Well, as it happened, many of our customers, including some of the largest institutional ones, had not gotten this memo, and did in fact pay by check.

So with some reluctance, she gave up and asked Rik and me to write a replacement billing and accounting system.

As I mentioned, I had always wanted to do this. I had very clear ideas, dating back many years, about mistakes I would not make, were I ever called upon to write a billing system.

For example, I have many times received a threatening notice of this sort:

Your account is currently past due! Pay the outstanding balance of $0.00 or we will be forced to refer your account for collection.

What I believe happened here is: some idiot programmer knows that money amounts are formatted with decimal points, so decides to denominate the money with floats. The amount I paid rounds off a little differently than the amount I actually owed, and the result after subtraction is all roundoff error, and leaves me with a nominal debt on the order of !!2^{-64}!! dollars.

So I have said to myself many times “If I'm ever asked to write a billing system, it's not going to use any fucking floats.” And at the meeting at which the CEO told me and Rik that we would write it, those were nearly the first words out of my mouth: No fucking floats.

Moonpig conceptual architecture

I will try to keep this as short as possible, including only as much as is absolutely required to understand the more interesting and generally applicable material later.

Pobox and Listbox accounts

ICG has two basic use cases. One is Pobox addresses and mailboxes, where the customer pays us a certain amount of money to forward (or store) their mail for a certain amount of time, typically a year. The other is Listbox mailing lists, where the customer pays us a certain amount to attempt a certain number of bulk email deliveries on their behalf.

The basic model is simple…

The life cycle for a typical service looks like this: The customer pays us some money: a flat fee for a Pobox account, or a larger or smaller pile for Listbox bulk mailing services, depending on how much mail they need us to send. We deliver service for a while. At some point the funds in the customer's account start to run low. That's when we send them an invoice for an extension of the service. If they pay, we go back and continue to provide service and the process repeats; if not, we stop providing the service.

…just like all basic models

But on top of this basic model there are about 10,019 special cases:

Customers might cancel their service early.

Pobox has a long-standing deal where you get a sixth year free if you pay for five years of service up front.

Sometimes a customer with only email forwarding ($20 per year) wants to upgrade their account to one that does storage and provides webmail access ($50 per year), or vice-versa, in the middle of a year. What to do in this case? Business rules dictate that they can apply their current balance to the new service, and it should be properly pro-rated. So if I have 64 days of $50-per-year service remaining, and I downgrade to the $20-per-year service, I now have 160 days of service left.

Well, that wasn't too bad, except that we should let the customer know the new expiration date. And also, if their service will now expire sooner than it would have, we should give them a chance to pay to extend the service back to the old date, and deal properly with their payment or nonpayment.

Also something has to be done about any 6th free year that I might have had. We don't want someone to sign up for 5 years of $50-per-year service, get the sixth year free, then downgrade their account and either get a full free year of $50-per-year service or get a full free year of $20-per-year service after only !!\frac{20}{50}!! of five full years.

Sometimes customers do get refunds.

Sometimes we screw up and give people a credit for free service, as an apology. Unlike regular credits, these are not refundable!

Some customers get gratis accounts. The other cofounder of ICG used to hand these out at parties.

There are a number of cases for coupons and discounts. For example, if you refer a friend who signs up, you get some sort of credit. Non-profit institutions get some sort of discount off the regular rates. Customers who pay for many accounts get some sort of bulk discount. I forget the details.

Most customers get their service cut off if they don't pay. Certain large and longstanding customers should not be treated so peremptorily, and are allowed to run a deficit.

And so to infinity and beyond.
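The downgrade rule from the list above (64 days at $50 per year becomes 160 days at $20 per year) is a simple proportion: the remaining value is the days left times the old price, and it buys days at the new price. Here is a sketch of just that one rule in integer arithmetic. The name prorated_days and the choice to drop partial days are assumptions, and it ignores the notification, sixth-free-year, and refund cases.

```perl
use strict;
use warnings;

# Hypothetical helper: convert the days left on one plan into days on
# another plan of a different yearly price. The remaining value is
# days_left * old_price; it buys that value / new_price days at the new rate.
sub prorated_days {
    my ($days_left, $old_price_per_year, $new_price_per_year) = @_;
    use integer;   # '/' truncates toward zero: partial days are dropped
    return $days_left * $old_price_per_year / $new_price_per_year;
}

my $after_downgrade = prorated_days(64, 50, 20);   # 160
my $after_upgrade   = prorated_days(160, 20, 50);  # 64
my $uneven          = prorated_days(10, 50, 20);   # 25
my $truncated       = prorated_days(7, 20, 50);    # 2 (2.8 days, truncated)
```

Whether a partial day should be dropped or rounded in the customer's favor is a business decision; the sketch just makes the choice visible in one place.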

Ledgers and Consumers

The Moonpig data store is mostly organized as a huge pile of ledgers. Each represents a single customer or account. It contains some contact information, a record of all the transactions associated with that customer, a history of all the invoices ever sent to that customer, and so forth.

A ledger also contains some consumer objects. Each consumer represents some service that we have promised to perform in exchange for money. The consumer has methods in it that you can call to say “I just performed a certain amount of service; please charge accordingly”. It has methods for calculating how much money has been allotted to it, how much it has left, how fast it is consuming its funds, how long it expects to last, and when it expects to run out of money. And it has methods for constructing its own replacement and for handing over control to that replacement when necessary.

Heartbeats

Every day, a cron job sends a heartbeat event to each ledger. The ledger doesn't do anything with the heartbeat itself; its job is to propagate the event to all of its sub-components. Most of those, in turn, ignore the heartbeat event entirely.

But consumers do handle heartbeats. The consumer will wake up and calculate how much longer it expects to live. (For Pobox consumers, this is simple arithmetic; for mailing-list consumers, it guesses based on how much mail has been sent recently.) If it notices that it is going to run out of money soon, it creates a successor that can take over when it is gone. The successor immediately sends the customer an invoice: “Hey, your service is running out, do you want to renew?”

Eventually the consumer does run out of money. At that time it hands over responsibility to its replacement. If it has no replacement, it will expire, and the last thing it does before it expires is terminate the service.
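The propagation pattern can be sketched in a few lines. The class names Ledger, ContactInfo, and Consumer here are hypothetical stand-ins, not Moonpig's real classes: the ledger forwards the event to every component, and only components that define a handler (checked with Perl's built-in can) react to it.

```perl
use strict;
use warnings;

# Hypothetical sketch: the ledger does nothing with the heartbeat itself
# except pass it to every component; components without a handler ignore it.
package Ledger;
sub new {
    my ($class, @components) = @_;
    return bless { components => [@components] }, $class;
}
sub heartbeat {
    my ($self, $event) = @_;
    for my $component (@{ $self->{components} }) {
        $component->handle_heartbeat($event) if $component->can('handle_heartbeat');
    }
}

package ContactInfo;   # has no handle_heartbeat method, so it is skipped
sub new { bless {}, shift }

package Consumer;
sub new { bless { woken => 0 }, shift }
sub handle_heartbeat {
    my ($self, $event) = @_;
    $self->{woken}++;   # a real consumer would check its funds and successor here
}

package main;
my $consumer = Consumer->new;
my $ledger   = Ledger->new(ContactInfo->new, $consumer);
$ledger->heartbeat({ date => '2013-12-16' });
```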

Things that suck: manual repairs

Somewhere is a machine that runs a daily cron job to heartbeat each ledger. What if one day, that machine is down, as they sometimes are, and the cron job never runs?

Or what if the machine crashes while the cron job is running, and the cron job only has time to heartbeat 3,672 of the 10,981 ledgers in the system?

In a perfect world, every component would be able to depend on exactly one heartbeat arriving every day. We don't live in that world. So it was an ironclad rule in Moonpig development that anything that handles heartbeat events must be prepared to deal with missing heartbeats, duplicate heartbeats, or anything else that could screw up.

When a consumer gets a heartbeat, it must not cheerfully say "Oh, it's the dawn of a new day! I'll charge for a day's worth of service!". It must look at the current date and at its own charge record and decide on that basis whether it's time to charge for a day's worth of service.

Now the answers to those questions of a few paragraphs earlier are quite simple. What if the machine is down and the cron job never runs? What to do?

A perfectly acceptable response here is: Do nothing. The job will run the next day, and at that time everything will be up to date. Some customers whose service should have been terminated today will have it terminated tomorrow instead; they will have received a free day of service. This is an acceptable loss. Some customers who should have received invoices today will receive them tomorrow. The invoices, although generated and sent a day late, will nevertheless show the right dates and amounts. This is also an acceptable outcome.

What if the cron job crashes after heartbeating 3,672 of 10,981 ledgers? Again, an acceptable response is to do nothing. The next day's heartbeat will bring the remaining 7,309 ledgers up to date, after which everything will be as it should. And an even better response is available: simply rerun the job. 3,672 of the ledgers will receive the same event twice, and will ignore it the second time.

Contrast this with the world in which heartbeats were (mistakenly) assumed to be reliable. In this world, the programming staff must determine precisely which ledgers received the event before the crash, either by trawling through the log files or by grovelling over the ledger data. Then someone has to hack up a program to send the heartbeats to just the 7,309 ledgers that still need it. And there is a stiff deadline: they have to get it done before tomorrow's heartbeat issues!

Making everything robust in the face of heartbeat failure is a little more work up front, but that cost is recouped the first time something goes wrong with the heartbeat process, when instead of panicking you smile and open another beer. Let N be the number of failures and manual repairs that are required before someone has had enough and makes the heartbeat handling code robust. I hypothesize that you can tell a lot about an organization from the value of N.

Here's an example of the sort of code that is required. The non-robust version of the code would look something like this:

```perl
sub charge {
    my ($self, $event) = @_;
    $self->charge_one_day();
}
```

The code, implemented by a role called Moonpig::Role::Consumer::ChargesPeriodically, actually looks something like this:

```perl
has last_charge_date => ( … );

sub charge {
    my ($self, $event) = @_;

    my $now = Moonpig->env->now;

    CHARGE: until ($self->next_charge_date->follows($now)) {
        my $next = $self->next_charge_date;
        $self->charge_one_day();
        $self->last_charge_date($next);

        if ($self->is_expired) {
            $self->replacement->handle_event($event) if $self->replacement;
            last CHARGE;
        }
    }
}
```

The last_charge_date member records the last time the consumer actually issued a charge. The next_charge_date method consults this value and returns the next day on which the consumer should issue a charge, not necessarily the following day, since the consumer might issue weekly or monthly charges. The consumer runs the until loop, using charge_one_day to issue another charge each time through and updating last_charge_date each time, until next_charge_date is in the future, at which point it stops. The one tricky part here is the if block. It is there because the consumer might run out of money before the loop completes. In that case it passes the heartbeat event on to its successor (replacement) and quits the loop. The replacement will run its own loop for the remaining period.
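The self-correcting behavior of that loop is easy to check with a simplified, hypothetical model. Here dates are integer day numbers instead of DateTime objects, charges are daily, the class name DailyConsumer is invented, and the hand-off to a replacement is omitted: the loop checks funds before charging and simply stops when they run out. What matters is that charging depends on the date, not on how many heartbeats arrived.

```perl
use strict;
use warnings;

# Simplified, hypothetical model of the catch-up loop: integer day numbers,
# daily charges, no replacement consumer.
package DailyConsumer;

sub new {
    my ($class, %arg) = @_;
    return bless { last_charge_day => $arg{start_day} - 1,
                   funds           => $arg{funds},
                   daily_cost      => $arg{daily_cost},
                   charges         => 0 }, $class;
}

sub next_charge_day { $_[0]{last_charge_day} + 1 }

# Called on every heartbeat; safe to call late, early, or repeatedly.
sub charge {
    my ($self, $today) = @_;
    CHARGE: until ($self->next_charge_day > $today) {
        last CHARGE if $self->{funds} < $self->{daily_cost};   # out of money
        $self->{funds} -= $self->{daily_cost};
        $self->{charges}++;
        $self->{last_charge_day} = $self->next_charge_day;
    }
}

package main;
my $c = DailyConsumer->new(start_day => 1, funds => 10 * 5479, daily_cost => 5479);
$c->charge(3);    # heartbeat on day 3: charges days 1, 2, 3
$c->charge(3);    # duplicate heartbeat: nothing left to charge
$c->charge(6);    # heartbeats for days 4 and 5 were lost: catches up on days 4-6
$c->charge(20);   # only 4 more days are funded: charges days 7-10, then stops
```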

Things that suck: real-time testing

A customer pays us $20. This will cover their service for 365 days. The business rules say that they should receive their first invoice 30 days before the current service expires; that is, after 335 days. How are we going to test that the invoice is in fact sent precisely 335 days later?

Well, put like that, the answer is obvious: Your testing system must somehow mock the time. But obvious as this is, I have seen many, many tests that made some method call and then did sleep 60, waiting and hoping that the event they were looking for would have occurred by then, reporting a false positive if the system was slow, and making everyone that much less likely to actually run the tests.

I've also seen a lot of tests that crossed their fingers and hoped that a certain block of code would execute between two ticks of the clock, and that failed nondeterministically when that didn't happen.

So another ironclad law of Moonpig design was that no object is ever allowed to call the time() function to find out what time it actually is. Instead, to get the current time, the object must call Moonpig->env->now.

The tests run in a test environment. In the test environment, Moonpig->env returns a Moonpig::Env::Test object, which contains a fake clock. It has a stop_clock method that stops the clock, and an elapse_time method that forces the clock forward a certain amount. If you need to check that something happens after 40 days, you can call Moonpig->env->elapse_time(86_400 * 40), or, more likely:

```perl
for (1..40) {
    Moonpig->env->elapse_time(86_400);
    $test_ledger->heartbeat;
}
```

In the production environment, the environment object still has a now method, but one that returns the true current time from the system clock. Trying to stop the clock in the production environment is a fatal error.

Similarly, no Moonpig object ever interacts directly with the database; instead it must always go through the mediator returned by Moonpig->env->storage. In tests, this can be a fake storage object or whatever is needed. It's shocking how many tests I've seen that begin by allocating a new MySQL instance and executing a huge pile of DDL. Folks, this is not how you write a test.

Again, no Moonpig object ever posts email. It asks Moonpig->env->email_sender to post the email on its behalf. In tests, this uses the CPAN Email::Sender::Transport suite, and the test code can interrogate the email_sender to see exactly what emails would have been sent.

We never did anything that required filesystem access, but if we had, there would have been a Moonpig->env->fs for opening and writing files.

The Moonpig->env object makes this easy to get right, and hard to screw up. Any code that acts on the outside world becomes a red flag: Why isn't this going through the environment object? How are we going to test it?
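The environment-object pattern can be sketched without any of Moonpig's machinery. The class names Env::Production and Env::Test and the helper invoice_due are hypothetical; only now, stop_clock, and elapse_time echo the methods described above. As a simplification, this fake clock never advances on its own, only through elapse_time, so it needs no stop_clock of its own. The business logic takes the environment as an argument and never calls time().

```perl
use strict;
use warnings;

# Hypothetical sketch of the environment-object pattern (not Moonpig's code).
package Env::Production;
sub new        { bless {}, shift }
sub now        { time() }                      # the real system clock
sub stop_clock { die "can't stop the clock in production\n" }

package Env::Test;
sub new {
    my ($class, %arg) = @_;
    return bless { now => $arg{start} }, $class;
}
sub now         { $_[0]{now} }
sub elapse_time { my ($self, $seconds) = @_; $self->{now} += $seconds }

package main;

# Business logic asks the environment for the time and never calls time().
# The 335-day rule comes from the example above.
sub invoice_due {
    my ($env, $paid_at) = @_;
    return $env->now - $paid_at >= 335 * 86_400;
}

my $env     = Env::Test->new(start => 1_000_000_000);
my $paid_at = $env->now;
my @due_days;
for my $day (1 .. 340) {
    $env->elapse_time(86_400);   # one simulated day, no sleeping
    push @due_days, $day if invoice_due($env, $paid_at);
}
# @due_days is (335 .. 340): the invoice first becomes due on day 335.
```

The 340-day simulation runs instantly and deterministically, which is the whole point: no sleep, and no hoping that the code finishes between two ticks of the real clock.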

Things that suck: floating-point numbers

I've already complained about how I loathe floating-point numbers. I just want to add that although there are probably use cases for floating-point arithmetic, I don't actually know what they are. I've had a pretty long and varied programming career so far, and legitimate uses for floating-point numbers seem very few. They are really complicated, and fraught with traps; I say this as someone with a much stronger mathematical background than most programmers.

The law we adopted for Moonpig was that all money amounts are integers. Each money amount is an integral number of “millicents”, abbreviated “m¢”, worth !!\frac1{1000}!! of a cent, which in turn is !!\frac1{100}!! of a U.S. dollar. Fractional millicents are not allowed. Division must be rounded to the appropriate number of millicents, usually in the customer's favor, although in practice it doesn't matter much, because the amounts are so small.

For example, a $20-per-year Pobox account (2,000,000 m¢) actually bills $$\left\lfloor\frac{2{,}000{,}000}{365}\right\rfloor = 5479$$ m¢ each day. (5464 in leap years.)

Since you don't want to clutter up the test code with a bunch of numbers like 1000000 ($10), there are two utterly trivial utility subroutines:

```perl
sub cents   { $_[0] * 1000 }
sub dollars { $_[0] * 1000 * 100 }
```

Now $10 can be written dollars(10).

Had we dealt with floating-point numbers, it would have been tempting to write test code that looked like this:

```perl
cmp_ok(abs($actual_amount - $expected_amount), "<", $EPSILON, …);
```

That's because with floats, it's so hard to be sure that you won't end up with a leftover !!2^{-64}!! or something, so you write all the tests to ignore small discrepancies. This can lead to overlooking certain real errors that happen to result in small discrepancies. With integer amounts, these discrepancies have nowhere to hide. It sometimes happened that we would write some test and the money amount at the end would be wrong by 2m¢. Had we been using floats, we might have shrugged and attributed this to incomprehensible roundoff error.

But with integers, that is a difference of 2, and you cannot shrug it off. There is no incomprehensible roundoff error. All the calculations are exact, and if some integer is off by 2 it is for a reason. These tiny discrepancies usually pointed to serious design or implementation errors. (In contrast, when a test would show a gigantic discrepancy of a million or more m¢, the bug was always quite easy to find and fix.)

There are still roundoff errors; they are unavoidable. For example, a consumer for a $20-per-year Pobox account bills only 365·5479m¢ = 1999835m¢ per year, an error in the customer's favor of 165m¢ per account; after 12,121 years the customer will have accumulated enough error to pay for an extra year of service. For a business of ICG's size, this loss was deemed acceptable. For a larger business, it could be significant. (Imagine 6,000,000 customers times 165m¢ each; that's $9,900.) In such a case I would keep the same approach but denominate everything in micro-cents instead.
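The figures in the last few paragraphs can be reproduced with integer-only Perl. The cents and dollars helpers are the ones shown earlier; daily_charge is a hypothetical name for the per-day computation, and rounding down is the customer-favoring choice the text describes.

```perl
use strict;
use warnings;

# Money as integer millicents (m¢).
sub cents   { $_[0] * 1000 }
sub dollars { $_[0] * 1000 * 100 }

sub daily_charge {
    my ($yearly_mc, $days_in_year) = @_;
    use integer;   # '/' truncates, rounding the charge down (in the customer's favor)
    return $yearly_mc / $days_in_year;
}

my $yearly    = dollars(20);                 # 2_000_000 m¢
my $per_day   = daily_charge($yearly, 365);  # 5479 m¢
my $per_leap  = daily_charge($yearly, 366);  # 5464 m¢
my $shortfall = $yearly - 365 * $per_day;    # 165 m¢ per account per year
my $years_for_free_year = int($yearly / $shortfall);   # 12121
```

Because every quantity is an integer, a test can assert `$shortfall == 165` exactly; there is no epsilon to hide a 2m¢ bug behind.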

Happily, Moonpig did not have to deal with multiple currencies. That would have added tremendous complexity to the financial calculations, and I am not confident that Rik and I could have gotten it right in the time available.

Things that suck: dates and times

Dates and times are terribly complicated, partly because the astronomical motions they model are complicated, and mostly because the world's bureaucrats keep putting their fingers in. It's been suggested recently that you can identify whether someone is a programmer by asking if they have an opinion on time zones. A programmer will get very red in the face and pound their fist on the table.

After I wrote that sentence, I then wrote 1,056 words about the right way to think about date and time calculations, which I'll spare you, for now. I'm going to try to keep this from turning into an article about all the ways people screw up date and time calculations, by skipping the arguments and just stating the main points:

- Date-time values are a kind of number, and should be considered as such. In particular:

  - Date-time values inside a program should be immutable.

  - There should be a single canonical representation of date-time values in the program, and it should be chosen for ease of calculation.

  - If the program does have to deal with date-time values in some other representation, it should convert them to the canonical representation as soon as possible, or from the canonical representation as late as possible, and in any event should avoid letting non-canonical values percolate around the program.
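Here is a minimal sketch of the "convert at the edges" rule, assuming (purely for illustration; Moonpig itself used a DateTime subclass) that the canonical representation is integer seconds since the Unix epoch in UTC. Text is parsed into that form on the way in, all arithmetic is plain integer arithmetic, and formatting happens only on the way out. parse_utc and format_utc are hypothetical names; timegm and strftime come from the core Time::Local and POSIX modules.

```perl
use strict;
use warnings;
use Time::Local qw(timegm);
use POSIX qw(strftime);

# Edge in: text -> canonical epoch seconds (input is assumed to be UTC).
sub parse_utc {
    my ($text) = @_;
    my ($y, $mo, $d, $h, $mi, $s) =
        $text =~ /\A(\d{4})-(\d\d)-(\d\d) (\d\d):(\d\d):(\d\d)\z/
        or die "unparseable date-time: $text\n";
    return timegm($s, $mi, $h, $d, $mo - 1, $y);   # timegm months are 0-based
}

# Edge out: canonical epoch seconds -> text.
sub format_utc { strftime('%Y-%m-%d %H:%M:%S', gmtime $_[0]) }

my $start = parse_utc('2013-12-23 05:00:00');
my $later = $start + 14 * 3600;     # plain integer arithmetic in the middle
my $shown = format_utc($later);     # "2013-12-23 19:00:00"
```

Plain integers are also immutable for free: `$start + 14 * 3600` produces a new value and cannot change `$start` out from under some other part of the program.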

We held our noses when we chose to use DateTime. It has my grudging approval, with a large side helping of qualifications. The internal parts of it are okay, but the methods it provides are almost never what you actually want to use. For example, it provides a set of mutators. But, as per item 1 above, date-time values are numbers and ought to be immutable. Rik has a good story about a horrible bug that was caused when he accidentally called the ->subtract method on some widely-shared DateTime value and so mutated it, causing an unexpected change in the behavior of widely-separated parts of the program that consulted it afterward.

So instead of using raw DateTime, we wrapped it in a derived class called Moonpig::DateTime. This removed the mutators and also made a couple of other convenient changes that I will shortly describe.

Things that really really suck: DateTime::Duration

If you have a pair of DateTime objects and you want to know how much time separates the two instants that they represent, you have several choices, most of which will return a DateTime::Duration object. All those choices are wrong, because DateTime::Duration objects are useless. They are a kind of Roach Motel for date and time information: Data checks into them, but doesn't check out. I am not going to discuss that here, because if I did it would take over the article, but I will show the simple example I showed in the talk:

use DateTime;

my $then = DateTime->new( month => 4, day => 2, year => 1969,
                          hour => 0, minute => 0, second => 0);
my $now = DateTime->now();
my $elapsed = $now - $then;
print $elapsed->in_units('seconds'), "\n";

You might think, from looking at this code, that it would print the number of seconds that elapsed between 1969-04-02 00:00:00 (in some unspecified time zone!) and the current moment. You would be mistaken; you have failed to reckon with the $elapsed object, which is a DateTime::Duration. Computing this object seems reasonable, but as far as I know, once you have it there is nothing to do but throw it away and start over, because there is no way to extract from it the elapsed amount of time, or indeed anything else of value.

In any event, the print here does not print the correct number of seconds. Instead it prints ME CAGO EN LA LECHE, which I have discovered is Spanish for “I shit in the milk”.

So much for DateTime::Duration. When a and b are Moonpig::DateTime objects, a-b returns the number of seconds that have elapsed between the two times; it is that simple. You can divide it by 86,400 to get the number of days.

Other arithmetic is similarly overloaded: If i is a number, then a+i and a-i are the times obtained by adding i seconds to a or subtracting i seconds from a, respectively.
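A minimal sketch of this style of arithmetic, using core Perl's overload pragma. The class name Instant is hypothetical, and representing a time as a bare count of epoch seconds is an assumption of the sketch; DateTime::Moonpig itself builds on DateTime.

```perl
use strict;
use warnings;

package Instant {
    use Carp qw(croak);
    use Scalar::Util qw(blessed);
    use overload
        '-' => \&_minus,
        '+' => \&_plus;

    sub new   { my ($class, $epoch) = @_; return bless { epoch => $epoch }, $class }
    sub epoch { $_[0]{epoch} }    # read-only accessor; there are no mutators

    sub _is_instant { blessed($_[0]) && $_[0]->isa(__PACKAGE__) }

    # Instant + seconds (in either order) gives a new Instant.
    sub _plus {
        my ($self, $other) = @_;
        croak "cannot add two instants" if _is_instant($other);
        return __PACKAGE__->new($self->epoch + $other);
    }

    # Instant - Instant gives plain seconds; Instant - seconds gives an Instant.
    sub _minus {
        my ($self, $other, $swapped) = @_;
        if (_is_instant($other)) {
            my $diff = $self->epoch - $other->epoch;
            return $swapped ? -$diff : $diff;
        }
        croak "cannot subtract an instant from a number" if $swapped;
        return __PACKAGE__->new($self->epoch - $other);
    }
}
```

Adding two Instants is refused outright, since the sum of two times has no meaning; every operation returns a new value and leaves its operands untouched.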

(C programmers should note the analogy with pointer arithmetic; C's pointers, and date-time values—also temperatures—are examples of a mathematical structure called an affine space, and study of the theory of affine spaces tells you just what rules these objects should obey. I hope to discuss this at length another time.)
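For reference, these are the rules an affine space imposes, written for a set $T$ of date-time values with durations $d$ measured in seconds. This is only the standard summary, not the longer discussion promised above:

```latex
\begin{aligned}
t_1 - t_2 &\in \mathbb{R} && \text{(time minus time is a duration)}\\
t \pm d &\in T && \text{(time plus or minus a duration is a time)}\\
(t + d) - t &= d\\
(t + d_1) + d_2 &= t + (d_1 + d_2)\\
t_2 + (t_1 - t_2) &= t_1\\
t_1 + t_2 &\ \text{is undefined}
\end{aligned}
```

In C the same rules appear as pointer minus pointer giving a ptrdiff_t, pointer plus integer giving a pointer, and pointer plus pointer being rejected by the compiler.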

Going along with this arithmetic is a family of trivial convenience functions, such as:

sub hours { $_[0] * 3600 }
sub days  { $_[0] * 86400 }

so that you can use $a + days(7) to find the time 7 days after $a. Programmers at the Amsterdam talk were worried about this: what about leap seconds? And they are correct: the name days is not quite honest, because it promises, but does not deliver, exactly 7 days. It can't, because the definition of the day varies widely from place to place and time to time; not only can't you know how long 7 days is unless you know where it is, but it doesn't even make sense to ask. That is all right. You just have to be aware that when you add days(7), the resulting time might not be the same time of day 7 days later. (Indeed, if the local date and time laws are sufficiently bizarre, it could in principle be completely wrong. But since Moonpig::DateTime objects are always reckoned in UTC, it is never more than one second wrong.)

Anyway, I was afraid that Moonpig::DateTime would turn out to be a leaky abstraction, producing pleasantly easy and correct results thirty times out of thirty-one, and annoyingly wrong or bizarre results the other time. But I was surprised: it never caused a problem, or at least none has come to light. I am working on releasing this module to CPAN, under the name DateTime::Moonpig. [ Addendum: DateTime::Moonpig is now available on CPAN. ]
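To make the days(7) caveat concrete, the sketch below does the arithmetic on POSIX epoch seconds. These do not count leap seconds, unlike the real UTC time scale, so adding days(7) always lands on the same UTC clock time. The starting moment is arbitrary. In a zone with daylight saving time the same two instants would not show the same local time: 14:30 UTC was 10:30 in New York on 1 November 2013 but 09:30 on 8 November, because US daylight saving time ended on 3 November.

```perl
use strict;
use warnings;
use POSIX qw(strftime);
use Time::Local qw(timegm);

sub hours { $_[0] * 3600 }
sub days  { $_[0] * 86400 }

# An arbitrary moment shortly before the end of US daylight saving time.
my $t     = timegm(0, 30, 14, 1, 10, 2013);    # 2013-11-01 14:30:00 UTC
my $later = $t + days(7);

# Epoch arithmetic ignores leap seconds, so the UTC time of day is unchanged.
print strftime("%Y-%m-%d %H:%M:%S UTC", gmtime($later)), "\n";
```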

Things that suck: mutable data

I left this out of the talk, by mistake, but this is a good place to mention it: mutable data is often a bad idea. In the billing system we wanted to avoid it for accountability reasons: We never wanted the customer service agent to be in the position of being unable to explain to the customer why we thought they owed us $28.39 instead of the $28.37 they claimed they owed; we never wanted ourselves to be in the position of trying to track down a billing system bug only to find that the trail had been erased.

One of the maxims Rik and I repeated frequently was that the moving finger writes, and, having writ, moves on. Moonpig is full of methods with names like is_expired, is_superseded, is_canceled, is_closed, is_obsolete, is_abandoned and so forth, representing entities that have been replaced by other entities but which are retained as part of the historical record.

For example, a consumer has a successor, to which it will hand off responsibility when its own funds are exhausted; if the customer changes their mind about their future service, this successor might be replaced with a different one, or replaced with none. This doesn't delete or destroy the old successor. Instead it marks the old successor as "superseded", simultaneously recording the supersession time, and pushes the new successor (or undef, if none) onto the end of the target consumer's replacement_history array. When you ask for the current successor, you are getting the final element of this array. This pattern appeared in several places. In a particularly simple example, a ledger was required to contain a Contact object with contact information for the customer to which it pertained. But the Contact wasn't simply this:

has contact => (
    is       => 'rw',
    isa      => role_type( 'Moonpig::Role::Contact' ),
    required => 1,
);

Instead, it was an array; "replacing" the contact actually pushed the new contact onto the end of the array, from which the contact accessor returned the final element:

has contact_history => (
    is       => 'ro',
    isa      => ArrayRef[ role_type( 'Moonpig::Role::Contact' ) ],
    required => 1,
    traits   => [ 'Array' ],
    handles  => {
        contact         => [ get => -1 ],
        replace_contact => 'push',
    },
);
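For readers who don't use Moose, here is roughly what those two delegated methods amount to, as a plain-Perl sketch. LedgerSketch is a hypothetical class, the contacts are plain hashes rather than Moonpig::Role::Contact objects, and type checking is omitted.

```perl
use strict;
use warnings;

# Hypothetical hand-written equivalent of the delegations, without Moose.
package LedgerSketch {
    use Carp qw(croak);

    sub new {
        my ($class, %arg) = @_;
        croak "contact_history is required" unless ref($arg{contact_history}) eq 'ARRAY';
        return bless { contact_history => [ @{ $arg{contact_history} } ] }, $class;
    }

    # Like contact => [ get => -1 ]: the current contact is the last one pushed.
    sub contact { $_[0]{contact_history}[-1] }

    # Like replace_contact => 'push': nothing is overwritten; history only grows.
    sub replace_contact {
        my ($self, @new) = @_;
        push @{ $self->{contact_history} }, @new;
        return;
    }

    # A copy of the list, so callers cannot rewrite the history itself.
    sub contact_history { @{ $_[0]{contact_history} } }
}
```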

Similarly, what happens if we send the customer an invoice for three services, and they inform customer service that they want to continue two of the services but cancel the third? We need to throw away the old invoice, which will never be paid, and issue a new one. The old invoice remains in the system, marked "abandoned", with a pointer to the new invoice.

Things that suck: relational databases

Why do we use relational databases, anyway? Is it because they cleanly and clearly model the data we want to store? No, it's because they are lightning fast.

When your data truly is relational, a nice flat rectangle of records, each with all the same fields, RDBs are terrific. But Moonpig doesn't have much relational data. Its basic datum is the Ledger, which has a bunch of disparate subcomponents, principally a heterogeneous collection of Consumer objects. And I would guess that most programs don't deal in relational data; like Moonpig, they deal in some sort of object network.

Nevertheless we try to represent this data relationally, because we have a relational database, and when you have a hammer, you go around hammering everything with it, whether or not that thing needs hammering.

When the object model is mature and locked down, modeling the objects relationally can be made to work. But when the object model is evolving, it is a disaster. Your relational database schema changes every time the object model changes, and then you have to find some way to migrate the existing data forward from the old schema. Or worse, and more likely, you become reluctant to let the object model evolve, because reflecting that evolution in the RDB is so painful. The RDB becomes a ball and chain locked to your program's ankle, preventing it from going where it needs to go. Every change is difficult and painful, so you avoid change. This is the opposite of the way to design a good program. A program should be light and airy, its object model like a string of pearls.

In theory the mapping between the RDB and the objects is transparent, and is taken care of seamlessly by an ORM layer. That would be an awesome world to live in, but we don't live in it and we may never.

Things that really really suck: ORM software

Right now the principal value of ORM software seems to be if your program is too fast and you need it to be slower; the ORM is really good at that. Since speed was the only benefit the RDB was providing in the first place, you have just attached two large, complex, inflexible systems to your program and gotten nothing in return.

Watching the ORM try to model the objects is somewhere between hilariously pathetic and crushingly miserable. Perl's DBIx::Class, to the extent it succeeds, succeeds because it doesn't even try to model the objects in the database. Instead it presents you with objects that represent database rows. This isn't because a row needs to be modeled as an object—database rows have no interesting behavior to speak of—but because the object is an access point for methods that generate SQL. DBIx::Class is not for modeling objects, but for generating SQL. I only realized this recently, and angrily shouted it at the DBIx::Class experts, expecting my denunciation to be met with rage and denial. But they just smiled with amusement. “Yes,” said the DBIx::Class experts on more than one occasion, “that is exactly correct.” Well then.

So Rik and I believe that for most (or maybe all) projects, trying to store the objects in an RDB, with an ORM layer mediating between the program and the RDB, is a bad, bad move. We determined to do something else. We eventually brewed our own object store, and this is the part of the project of which I'm least proud, not because the object store itself was a bad idea, but because I believe we probably made every possible mistake that could be made, even the ones that everyone writing an object store should already know not to make.

For example, the object store has a method, retrieve_ledger, which takes a ledger's ID number, reads the saved ledger data from the disk, and returns a live Ledger object. But it must make sure that every such call returns not just a Ledger object with the right data, but the same object. Otherwise two parts of the program will have different objects to represent the same data, one part will modify its object, and the other part, looking at a different object, will not see the change it should see. It took us a while to figure out problems like this; we really did not know what we were doing.
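The usual cure is an identity map: a cache from ID to the one live object for that ID. Here is a minimal sketch, assuming the serialized ledgers live in a hash that stands in for the database table, and using Scalar::Util's weaken so that the cache alone does not keep ledgers in memory. %TABLE, %LIVE, and this retrieve_ledger are illustrative, not Moonpig's code.

```perl
use strict;
use warnings;
use Scalar::Util qw(weaken);
use Storable qw(nfreeze thaw);

# Hypothetical stand-ins: %TABLE plays the role of the on-disk table,
# %LIVE maps each GUID to the one live object currently representing it.
my %TABLE;
my %LIVE;

sub retrieve_ledger {
    my ($guid) = @_;
    # Reuse the live object if some part of the program still holds it.
    return $LIVE{$guid} if defined $LIVE{$guid};
    my $frozen = $TABLE{$guid} // die "no ledger with GUID $guid\n";
    my $ledger = thaw($frozen);
    $LIVE{$guid} = $ledger;
    weaken($LIVE{$guid});    # the cache alone must not keep the object alive
    return $ledger;
}
```

Once every holder of a ledger lets go of it, the weak entry becomes undefined and the next call thaws a fresh copy.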

What we should have done, instead of building our own object store, was use someone else's object store. KiokuDB is frequently mentioned in this context. After I first gave this talk people asked “But why didn't you use KiokuDB?” or, on hearing what we did do, said “That sounds a lot like KiokuDB”. I had to get Rik to remind me why we didn't use KiokuDB. We had considered it, and decided to do our own not for technical but for political reasons. The CEO, having made the unpleasant decision to have me and Rik write a new billing system, wanted to see some progress. If she had asked us after the first week what we had accomplished, and we had said “Well, we spent a week figuring out KiokuDB,” her head might have exploded. Instead, we were able to say “We got the object store about three-quarters finished”. In the long run it was probably more expensive to do it ourselves, and the result was certainly not as good. But in the short run it kept the customer happy, and that is the most important thing; I say this entirely in earnest, without either sarcasm or bitterness.

(On the other hand, when I ran this article by Rik, he pointed out that KiokuDB had later become essentially unmaintained, and that had we used it he would have had to become the principal maintainer of a large, complex system which he did not help design or implement. The Moonpig object store may be technically inferior, but Rik was with it from the beginning and understands it thoroughly.)

Our object store

All that said, here is how our object store worked. The bottom layer was an ordinary relational database with a single table. During the test phase this database was SQLite, and in production it was IC Group's pre-existing MySQL instance. The table had two fields: a GUID (globally unique identifier) on one side, and on the other side a copy of the corresponding Ledger object, serialized with Perl's Storable module. To retrieve a ledger, you look it up in the table by GUID. To retrieve a list of all the ledgers, you just query the GUID field. That covers the two main use-cases, which are customer service looking up a customer's account history, and running the daily heartbeat job. A subsidiary table mapped IC Group's customer account numbers to ledger GUIDs, so that the storage engine could look up a particular customer's ledger starting from their account number. (Account numbers are actually associated with Consumers. Once you have the right ledger, a simple method call to the ledger will retrieve the consumer object, but finding the right ledger requires a table.) There were a couple of other tables of that sort, but overall it was a small thing.

There are some fine points to consider. For example, you can choose whether to store just the object data, or the code as well. The choice is clear: you must store only the data, not the code. Otherwise, you would have to update all the stored objects every time you made a code change such as a bug fix. It should be clear that this would have discouraged bug fixes, and that had we gone this way the project would have ended as a pile of smoking rubble. Since the code is not stored in the database, the object store must be responsible, whenever it loads an object, for making sure that the correct class for that object actually exists. The solution was to store, along with every object, a list of all the roles that it must perform. At object load time, if the object's class doesn't exist yet, the object store retrieves this list of roles (stored in a third column, parallel to the object data) and uses the MooseX::ClassCompositor module to create a new class that does those roles. MooseX::ClassCompositor was something Rik wrote for the purpose, but it seems generally useful for such applications.
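Here is a minimal sketch of that layout, under loud assumptions: in-memory hashes stand in for the SQL tables (so no DBI is needed), Storable does the serialization, and the names store_ledger, fetch_ledger, fetch_by_account, and all_guids are hypothetical. The roles column is simply returned alongside the thawed data; a real loader would hand that list to something like MooseX::ClassCompositor to build the class.

```perl
use strict;
use warnings;
use Storable qw(nfreeze thaw);

# Hypothetical in-memory stand-ins for the tables: each ledger row holds the
# serialized object data plus the list of roles the object must perform.
my %ledger_table;      # guid => { data => ..., roles => [...] }
my %account_to_guid;   # subsidiary table: customer account number => guid

sub store_ledger {
    my ($guid, $ledger, $roles, @account_numbers) = @_;
    # Only data is serialized; the code lives in the program, not the store.
    $ledger_table{$guid} = { data => nfreeze($ledger), roles => [@$roles] };
    $account_to_guid{$_} = $guid for @account_numbers;
}

# Returns the thawed data followed by its role list, or nothing if absent.
sub fetch_ledger {
    my ($guid) = @_;
    my $row = $ledger_table{$guid} or return;
    return (thaw($row->{data}), @{ $row->{roles} });
}

sub fetch_by_account {
    my ($account) = @_;
    my $guid = $account_to_guid{$account} or return;
    return fetch_ledger($guid);
}

# Listing every ledger only needs the GUID column, e.g. for a nightly job.
sub all_guids { sort keys %ledger_table }
```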

Every once in a while you may make an upward-incompatible change to the object format. Renaming an object field is such a change, since the field must be renamed in all existing objects, but adding a new field isn't, unless the field is mandatory. When this happened—much less often than you might expect—we wrote a little job to update all the stored objects. This occurred only seven times over the life of the project; the update programs are all very short.
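A job of that kind might look like the following sketch. It assumes objects are stored as Storable-serialized hashes in a GUID-keyed table (again simulated with a hash), and it renames only a top-level field; rename_field_everywhere is a hypothetical name.

```perl
use strict;
use warnings;
use Storable qw(nfreeze thaw);

# Hypothetical one-off migration: rename a top-level field in every stored
# object. %$table maps GUID => serialized object data.
sub rename_field_everywhere {
    my ($table, $old, $new) = @_;
    my $changed = 0;
    for my $guid (sort keys %$table) {
        my $obj = thaw($table->{$guid});
        next unless exists $obj->{$old};      # already migrated, or never had it
        $obj->{$new} = delete $obj->{$old};
        $table->{$guid} = nfreeze($obj);
        $changed++;
    }
    return $changed;
}
```

Because objects that lack the old field are skipped, running the job a second time does nothing.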

We did also make some changes to the way the objects themselves were stored: Booking.com's Sereal module was released while the project was going on, and we switched to it in place of Storable. Also, one customer's Ledger object grew too big to store in the database field, which could have been a serious problem, but we were able to defer dealing with the problem by using gzip to compress the serialized data before storing it.
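A minimal sketch of compress-before-store. It uses core Storable in place of Sereal, plus the core IO::Compress::Gzip and IO::Uncompress::Gunzip modules; the helper names are hypothetical.

```perl
use strict;
use warnings;
use Storable qw(nfreeze thaw);
use IO::Compress::Gzip qw(gzip $GzipError);
use IO::Uncompress::Gunzip qw(gunzip $GunzipError);

# Serialize, then compress, before writing the blob to the database field.
sub freeze_compressed {
    my ($obj) = @_;
    my $frozen = nfreeze($obj);
    my $packed;
    gzip(\$frozen => \$packed) or die "gzip failed: $GzipError\n";
    return $packed;
}

# Reverse the two steps when reading the blob back.
sub thaw_compressed {
    my ($packed) = @_;
    my $frozen;
    gunzip(\$packed => \$frozen) or die "gunzip failed: $GunzipError\n";
    return thaw($frozen);
}
```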

The relational database provides transactions

The use of the RDB engine for the underlying storage got us MySQL's implementation of transactions and atomicity guarantees, which we trusted. This gave us a firm foundation on which to build the higher functions; without those guarantees you have nothing, and it is impossible to build a reliable system. But since they are there, we could build a higher-level transactional system on top of them.

For example, we used an optimistic locking scheme to prevent race conditions while updating a single ledger. For performance reasons you typically don't want to force all updates to be done through a single process (although it can be made to work; see Rochkind's Advanced Unix Programming). In an optimistic locking scheme, you store a version number with each record. Suppose you are the low-level storage manager and you get a request to update a ledger with a certain ID. Instead of doing this:

update ledger set serialized_data = …
  where ledger_id = 789

You do this:

update ledger set serialized_data = …, version = 4
  where ledger_id = 789 and version = 3

and you check the return value from the SQL to see how many records were actually updated. The answer must be 0 or 1. If it is 1, all is well and you report the successful update back to your caller. But if it is 0, that means that some other process got there first and updated the same ledger, changing its version number from the 3 you were expecting to something bigger. Your changes are now in limbo; they were applied to a version of the object that is no longer current, so you throw an exception. But is the exception safe? What if the caller had previously made changes to the database that should have been rolled back when the ledger failed to save? No problem! We had exposed the RDB transactions to the caller, so when the caller requested that a transaction be begun, we propagated that request into the RDB layer. When the exception aborted the caller's transaction, all the previous work we had done on its behalf was aborted back to the start of the RDB transaction, just as one wanted. The caller even had the option to catch the exception without allowing it to abort the RDB transaction, and to retry the failed operation.
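Here is a minimal simulation of that check. The hash %ledger stands in for the table, and update_if_version plays the part of the conditional UPDATE, returning the number of rows it changed; both are hypothetical. With DBI, that count would come from the return value of do, which returns the string "0E0" when no rows matched; that string is true in boolean context, so compare the count numerically rather than testing its truth.

```perl
use strict;
use warnings;

# Hypothetical in-memory stand-in for the ledger table.
my %ledger = (789 => { data => 'v3 data', version => 3 });

# Mimics "update ... set data = ?, version = ? where ledger_id = ? and
# version = ?" and returns the number of rows updated: 0 or 1.
sub update_if_version {
    my ($id, $expected, $new_data) = @_;
    my $row = $ledger{$id};
    return 0 unless $row && $row->{version} == $expected;
    $row->{data}    = $new_data;
    $row->{version} = $expected + 1;
    return 1;
}

# Storage-manager side: a zero row count means someone else got there first.
sub save_ledger {
    my ($id, $version_read, $new_data) = @_;
    update_if_version($id, $version_read, $new_data) == 1
        or die "ledger $id was changed by another process; retry\n";
}
```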

Drawbacks of the object store

The major drawback of the object store was that it was very difficult to aggregate data across ledgers: to do it you have to thaw each ledger, one at a time, and traverse its object structure looking for the data you want to aggregate. We planned that when this became important, we could have a method on the Ledger or its sub-objects which, when called, would store relevant numeric data into the right place in a conventional RDB table, where it would then be available for the usual SELECT and GROUP BY operations. The storage engine would call this whenever it wrote a modified Ledger back to the object store. The RDB tables would then be a read-only view of the parts of the data that were needed for building reports.

A related problem is that some kinds of data really are relational, and storing them in object form is extremely inefficient. The RDB has a terrible impedance mismatch for most kinds of object-oriented programming, but not for all kinds. The main example that comes to mind is that every ledger contains a transaction log of every transaction it has ever performed: when a consumer deducts its 5479 m¢, that's a transaction, and every day each consumer adds one to the ledger. The transaction log for a large ledger with many consumers can grow rapidly.

We planned from the first that this transaction data would someday move out of the ledger entirely into a single table in the RDB, access to which would be mediated by a separate object, called an Accountant. At present, the Accountant is there, but it stores the transaction data inside itself instead of in an external table.

The design of the object store was greatly simplified by the fact that all the data was divided into disjoint ledgers, and that only ledgers could be stored or retrieved. A minor limitation of this design was that there was no way for an object to contain a pointer to a Ledger object, either its own or some other one. Such a pointer would have spoiled Perl's lousy garbage collection, so we weren't going to do it anyway. In practice, the few places in the code that needed to refer to another ledger just stored the ledger's GUID instead and looked it up when it was needed. In fact every significant object was given its own GUID, which was then used as needed. This was Rik's strategy, and it was a good one. I was surprised to find how often it was useful to have a simple, reliable identifier for every object, and how much time I had formerly spent on programming problems that would have been trivially solved if objects had had GUIDs.

The object store was a success

In all, I think the object store technique worked well and was a smart choice that went strongly against prevailing practice. I would recommend the technique for similar projects, except for the part where we wrote the object store ourselves instead of using one that had been written already. Had we tried to use an ORM backed by a relational database, I think the project would have taken at least a third longer; had we tried to use an RDB without any ORM, I think we would not have finished at all.

Things that suck: multiple inheritance

After I had been using Moose for a couple of years, including the Moonpig project, Rik asked me what I thought of it. I was lukewarm. It introduces a lot of convenience for common operations, but also hides a lot of complexity under the hood, and the complexity does not always stay well-hidden. It is very big and very slow to start up. On the whole, I said, I could take it or leave it.

“Oh,” I added. “Except for Roles. Roles are awesome.” I had a long section in the talk about what is good about Roles, but I moved it out to a separate talk, so I am going to take that as a hint about what I should do here. As with my theory of dates and times, I will present only the thesis, and save the arguments for another post:

- Object-oriented programming is centered around objects, which are encapsulated groups of related data, and around methods, which are opaque functions for operating on particular kinds of objects.

- OOP does not mandate any particular theory of inheritance, either single or multiple, class-based or prototype-based, etc., and indeed, while all OOP systems have objects and methods that are pretty much the same, each has an inheritance system all its own.

- Over the past 30 years of OOP, many theories of inheritance have been tried, and all of them have had serious problems.

- If there were no alternative to inheritance, we would have to struggle on with inheritance. However, Roles are a good alternative to inheritance:

- Every problem solved by inheritance is solved at least as well by Roles.

- Many problems not solved at all by inheritance are solved by Roles.

- Many problems introduced by inheritance do not arise when using Roles.

- Roles introduce some of their own problems, but none of them are as bad as the problems introduced by inheritance.

- It's time to give up on inheritance. It was worth a try; we tried it as hard as we could for thirty years or more. It didn't work.

- I'm going to repeat that: Inheritance doesn't work. It's time to give up on it.
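Core Perl has no roles; Moose (via Moose::Role) and Role::Tiny provide them. To show the basic idea that separates roles from inheritance, here is a deliberately tiny hand-rolled sketch. A role's methods are copied into the consuming class at composition time, and the role can demand methods the class must supply. It is not how Moose implements roles, and it omits attributes, method modifiers, and conflict detection between roles; apply_role, does_role, and @REQUIRES are hypothetical names.

```perl
use strict;
use warnings;

my %does;    # class => { role => 1 }

# Check the role's requirements, then flatten its subs into $class.
# Nothing is inherited: the class's @ISA is never touched.
sub apply_role {
    my ($role, $class) = @_;
    no strict 'refs';
    for my $req (@{"${role}::REQUIRES"}) {
        die "$class must provide '$req' to do $role\n" unless $class->can($req);
    }
    for my $name (sort keys %{"${role}::"}) {
        next unless defined &{"${role}::$name"};    # copy subs only
        next if defined &{"${class}::$name"};       # in this sketch, the class's own method wins
        *{"${class}::$name"} = \&{"${role}::$name"};
    }
    $does{$class}{$role} = 1;
}

sub does_role { my ($class, $role) = @_; return $does{$class}{$role} ? 1 : 0 }

# Hypothetical role and class for illustration.
package Role::Describable {
    our @REQUIRES = ('name');
    sub describe { my ($self) = @_; return "This is " . $self->name }
}

package Widget {
    sub new  { my ($class, $name) = @_; return bless { name => $name }, $class }
    sub name { $_[0]{name} }
}

apply_role('Role::Describable', 'Widget');
```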

I plan to write more extensively on this later on.

This section is the end of the things I want to excoriate. Note the transition from multiple inheritance, which was a tremendous waste of everyone's time, to Roles, which in my opinion are a tremendous success, the Right Thing, and gosh if only Smalltalk-80 had gotten this right in the first place look how much trouble we all would have saved.

Things that are GOOD: web RPC APIs

Moonpig has a web API. Moonpig applications, such as the customer service dashboard, or the heartbeat job, invoke Moonpig functions through the API. The API is built using a system, developed in parallel with Moonpig, called Stick. (It was so-called because IC Group had tried before to develop a simple web API system, but none had been good enough to stick. This one, we hoped, would stick.)

The basic principle of Stick is distributed routing, which allows an object to have a URI, and to delegate control of the URIs underneath it to other objects.

To participate in the web API, an object must compose the Stick::Role::Routable role, which requires that it provide a _subroute method. The method is called with an array containing the path components of a URI. The _subroute method examines the array, or at least the first few elements, and decides whether it will handle the route. To refuse, it can throw an exception, or just return an undefined value, which will turn into a 404 error in the web protocol. If it does handle the path, it removes the part it handled from the array, and returns another object that will handle the rest, or, if there is nothing left, a public resource of some sort. In the former case the routing process continues, with the remaining route components passed to the _subroute method of the next object.

If the route is used up, the last object in the chain is checked to make sure it composes the Stick::Role::PublicResource role. This is to prevent accidentally exposing an object in the web API when it should be private. Stick then invokes one final method on the public resource, either resource_get, resource_post, or similar. Stick collects the return value from this method, serializes it and sends it over the network as the response.
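The routing loop just described can be sketched in a few lines of core Perl. This is not Stick's code: a hypothetical is_public_resource method stands in for composing Stick::Role::PublicResource, the route function and the Ledger and Consumer classes are illustrative, and exceptions thrown by _subroute are simply allowed to propagate.

```perl
use strict;
use warnings;
use Scalar::Util qw(blessed);

# Walk the path: each object's _subroute consumes some leading components
# and returns the next object, or undef to refuse (treated as a 404).
sub route {
    my ($root, $path) = @_;
    my @route  = grep { length } split m{/}, $path;
    my $target = $root;
    while (@route) {
        return (404) unless blessed($target) && $target->can('_subroute');
        my $remaining = @route;
        $target = $target->_subroute(\@route) or return (404);
        die "_subroute consumed no path components\n" if @route == $remaining;
    }
    # Only objects that declare themselves public may be served.
    return (404) unless blessed($target)
        && $target->can('is_public_resource') && $target->is_public_resource;
    return (200, $target->resource_get);
}

# Hypothetical classes for illustration.
package Consumer {
    sub new                { my ($class, $id) = @_; return bless { id => $id }, $class }
    sub is_public_resource { 1 }
    sub resource_get       { "consumer $_[0]{id}" }
}

package Ledger {
    sub new { my ($class) = @_; return bless { consumers => { 12345 => Consumer->new(12345) } }, $class }
    sub _subroute {
        my ($self, $route) = @_;
        return unless @$route >= 2 && $route->[0] eq 'consumer';
        my (undef, $id) = splice @$route, 0, 2;
        return $self->{consumers}{$id};    # undef if there is no such consumer
    }
}
```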

So for example, suppose a ledger wants to provide access to its consumers. It might implement _subroute like this:

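sub _subroute {
    my ($self, $route) = @_;

    # Handle only routes of the form consumer/<id>; refuse anything else.
    if (@$route >= 2 && $route->[0] eq "consumer") {
        shift @$route;                     # discard "consumer"
        my $consumer_id = shift @$route;
        return $self->find_consumer( id => $consumer_id );
    } else {
        return;   # 404
    }
}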

Then if /path/to/ledger is any URI that leads to a certain ledger, /path/to/ledger/consumer/12345 will be a valid URI for the specified ledger's consumer with ID 12345. A request to /path/to/ledger/FOOP/de/DOOP will yield a 404 error, as will a request to /path/to/ledger/consumer/98765 whenever find_consumer(id => 98765) returns undefined.

A common pattern is to have a path that invokes a method on the target object. For example, suppose the ledger objects are already addressable at certain URIs, and one would like to expose in the API the ability to tell a ledger to handle a heartbeat event. In Stick, this is incredibly easy to implement:

publish heartbeat => { -http_method => 'post' } => sub {
    my ($self) = @_;
    $self->handle_event( event('heartbeat') );
};

This creates an ordinary method, called heartbeat, which can be called in the usual way, but which is also invoked whenever an HTTP POST request arrives at the appropriate URI, the appropriate URI being anything of the form /path/to/ledger/heartbeat.

By default, publish expects the method to be invoked with GET, in which case the HTTP method can be omitted:

publish amount_due => sub {
    my ($self) = @_;
    …
    return abs($due - $avail);
};

More complicated published methods may receive arguments; Stick takes care of deserializing them, and checking that their types are correct, before invoking the published method. This is the ledger's method for updating its contact information:

publish _replace_contact => {
    -path        => 'contact',
    -http_method => 'put',
    attributes   => HashRef,
} => sub {
    my ($self, $arg) = @_;
    my $contact = class('Contact')->new($arg->{attributes});
    $self->replace_contact($contact);
    return $contact;
};

Although the method is named _replace_contact, it is available in the web API via a PUT request to /path/to/ledger/contact, rather than one to /path/to/ledger/_replace_contact.

If the contact information supplied in the HTTP request data is accepted by class('Contact')->new, the ledger's contact is updated. (class('Contact') is a utility method that returns the name of the class that represents a contact. This is probably just the string Moonpig::Class::Contact.)

In some cases the ledger has an entire family of sub-objects. For example, a ledger may have many consumers. In this case it's also equipped with a "collection" object that manages the consumers. The ledger can use the collection object as a convenient way to look up its consumers when it needs them, but the collection object also provides routing: If the ledger gets a request for a route that begins /consumers, it strips off /consumers and returns its consumer collection object, which handles further paths such as /guid/XXXX and /xid/1234 by locating and returning the appropriate consumer.

The collection object is a repository for all sorts of convenient behavior. For example, if one composes the Stick::Role::Collection::Mutable role onto it, it gains support for POST requests to …/consumers/add, handled appropriately.

Adding a new API method to any object is trivial, just a matter of adding a new published method. Unpublished methods are not accessible through the web API.
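To show why unpublished methods stay private, here is a guessed-at minimal model of publish; it is not Stick's implementation. It assumes publish installs the code as an ordinary method and also records an (HTTP method, path) entry in a registry that the web layer consults, so only registered methods can be reached. Argument declarations such as attributes => HashRef are ignored, and %PUBLISHED, dispatch, and LedgerAPI are hypothetical.

```perl
use strict;
use warnings;

# Hypothetical registry: class => { "VERB path" => method name }.
my %PUBLISHED;

# publish NAME => \%opts => CODE, or publish NAME => CODE (defaults to GET).
sub publish {
    my $name  = shift;
    my $code  = pop;
    my $opts  = shift // {};
    my $class = caller;
    my $verb  = uc($opts->{-http_method} // 'get');
    my $path  = $opts->{-path} // $name;
    no strict 'refs';
    *{"${class}::$name"} = $code;             # still an ordinary method
    $PUBLISHED{$class}{"$verb $path"} = $name;
}

# What the web layer would do once routing has reached an object.
sub dispatch {
    my ($obj, $verb, $path) = @_;
    my $name = $PUBLISHED{ ref($obj) }{ uc($verb) . " $path" } or return (404);
    return (200, $obj->$name());
}

package LedgerAPI {
    sub new    { bless { due => 30, avail => 12 }, shift }
    sub secret { 'not exposed' }              # never published
    ::publish(amount_due => sub { my ($self) = @_; abs($self->{due} - $self->{avail}) });
    ::publish(heartbeat => { -http_method => 'post' } => sub { 'heartbeat handled' });
}
```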

After I wrote this talk I wished I had written a talk about Stick instead. I'm still hoping to write one and present it at YAPC in Orlando this summer.

Things that are GOOD: Object-oriented testing

Unit tests often have a lot of repeated code, to set up test instances or run the same set of checks under several different conditions. Rik's Test::Routine makes a test program into a class. The class is instantiated, and the tests are methods that are run on the test object instance. Test methods can invoke one another. The test object's attributes are available to the test methods, so they're a good place to put test data. The object's initializer can set up the required test data. Tests can easily load and run other tests, all in the usual ways. If you like OO-style programming, you'll like all the same things about building tests with Test::Routine.
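Test::Routine itself is built on Moose, so the following is only a core-Perl sketch of the idea: a test program as a class whose attributes hold fixture data and whose test_* methods are the tests. The class, the test_ naming convention, and run_tests are hypothetical and are not Test::Routine's API.

```perl
use strict;
use warnings;

# Hypothetical test class: fixture data lives in attributes set up by the
# constructor, and every method named test_* is a test.
package LedgerTests {
    sub new {
        my ($class, %arg) = @_;
        return bless { amount_due => 100, paid => 30, %arg }, $class;
    }
    sub balance          { my ($self) = @_; return $self->{amount_due} - $self->{paid} }
    sub test_balance     { my ($self) = @_; return $self->balance == 70 }
    sub test_nonnegative { my ($self) = @_; return $self->balance >= 0 }
}

# A minimal runner: find the test_* methods and call each on the instance.
sub run_tests {
    my ($obj) = @_;
    my $class = ref $obj;
    no strict 'refs';
    my @names = grep { /^test_/ && defined &{"${class}::$_"} } sort keys %{"${class}::"};
    my %result;
    $result{$_} = $obj->$_() ? 1 : 0 for @names;
    return \%result;
}
```

Running the same tests against different fixture data is then just a matter of constructing another instance, for example LedgerTests->new(paid => 130).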

Things that are GOOD: Free software

All this stuff is available for free under open licenses:

(This has been a really long article. Thanks for sticking with me. Headers in the article all have named anchors, in case you want to refer someone to a particular section.)

(I suppose there is a fair chance that this will wind up on Hacker News, and I know how much the kids at Hacker News love to dress up and play CEO and Scary Corporate Lawyer, and will enjoy posting dire tut-tuttings about whether my disclosure of ICG's secrets is actionable, and how reluctant they would be to hire anyone who tells such stories about his previous employers. So I may as well spoil their fun by mentioning that I received the approval of ICG's CEO before I posted this.)

[ Addendum: A detailed description of DateTime::Moonpig is now available. ]

[ Addendum 20140208: Jesper Andersen has written an account of a surprisingly similar system that he wrote in Erlang. ]

[ Addendum 20200319: In connection with “DBIx::Class is not for modeling objects, but for generating SQL”, see The Troublesome Active Record Pattern, which comes to similar conclusions as me, but more intelligently reasoned and with more technical detail. Paterson says “The only workable alternative is to make queries first class objects”. This is what DBIx::Class does. ]

[Other articles in category /prog] permanent link

Things do get better

I flew back from Amsterdam on Friday, and the plane had an in-flight entertainment system that offered to show me movies or play me music. That itself is a reasonable thing to try, I think, because the flight is dull. But until this flight, I never felt that the promise had been fulfilled. Usually, in my experience, these things offer four or five awful movies that you can only imagine watching while strapped into a chair Clockwork Orange style, and one canned selection of music from each of nine genres. So the in-flight entertainment system is yet another perpetrator of oppression by mass media and yet another shovelful of the least-common-denominator culture that mass media fosters.

I don't think that least-common-denominator culture distributed by mass media is the worst evil perpetrated by the 20th century, but I do seriously think it is on the list of the top ten.

But not this time. Digital information technology has improved to the point that the in-flight system was able to offer me several dozen movies, a few of which I actually wanted to see, and a large selection of music, much more than I could possibly listen to during the seven-hour flight. And one of those selections was the 9th Symphony of Philip Glass.

I spent a large part of the flight alternately listening to the symphony, which I had not heard before, and marveling that it was there at all. “Who on earth,” I wondered, “thought it would be a good idea to put that in there?” I can't imagine there are that many people who want to listen to Philip Glass on a long airplane flight. But it seems that the technology has advanced to the point that the programming people have extra space they need to fill, so much extra space that it doesn't matter if they throw in some Philip Glass just in case, because why not?

I imagine it will get better from here too. Perhaps the next flight will offer me not just one selection from Philip Glass, but every possible selection from John Adams. But I think the in-flight entertainment system has crossed a critical threshold, and I will mock it no longer.

(My thanks to whatever crazy person decided to include Philip Glass on KLM flight 6053 last Friday. It brought me a lot of pleasure and helped pass the slow hours across the north Atlantic.)

[ Addendum 20150501: Unable to find a copy online, I asked my wife to get me a CD of the 9th Symphony for my birthday, and it is as wonderful as I remembered. Here's another way things got better: I put the CD into my laptop, to rip some MP3s from it, and discovered that Orange Mountain Music had saved me the trouble; the CD was pre-equipped with audio files in MP3, FLAC, and Ogg Vorbis format. ]

[Other articles in category /tech] permanent link

Thu, 12 Dec 2013

Conspiracy theories about Cory Doctorow

Recently I received (via William Gibson) a tweet from Cory Doctorow suggesting that I sign this petition: “Reform ECPA: Tell the Government to Get a Warrant”. The claim the petition makes is that “under the Electronic Communications Privacy Act of 1986, the IRS and hundreds of other agencies can read our communications without a warrant.”

I don't know if this is actually true, but it is certainly plausible; government agencies will interpret the law in whatever way is most convenient for them. In any case, let's stipulate that this is correct.

The petition web site was put up by the Obama administration, with the promise that any petition that accumulated 100,000 signatures would receive a response. Not a decisive action, or even a substantive response, but a response. Even this very low bar has not always been met. But let's also stipulate that preparing and signing such a petition has some value, at least in making the Executive respond to a demand by a largish group of voters.

Here is the specific action called for by this petition:

“We call on the Obama Administration to support ECPA reform and to reject any special rules that would force online service providers to disclose our email without a warrant.”

This is an extraordinarily weak demand, even by the already weak standards in play here.

If the main concern is that the IRS is demanding and receiving stored email, under the outdated provisions of the ECPA, there is a quick solution. The IRS is part of the Department of the Treasury, which is under the direct control of the President and his appointee, the Secretary of the Treasury. The internal regulations of the IRS are written by the Secretary, by Treasury officials the Secretary appoints, or by civil service bureaucrats who answer to the Secretary and the President. As long as those regulations don't conflict with an act of Congress, they're the law.

Obama could order the Secretary of the Treasury to write new IRS regulations requiring the IRS to seek and obtain a warrant or other judicial declaration before demanding taxpayer emails from third parties. He could even order this directly: “Executive Order 9991: The Internal Revenue Service shall not demand, from third parties, the disclosure of taxpayer emails without first obtaining, blah blah blah…” It could be done tomorrow: problem solved.

But the petition doesn't call on Obama or the Treasury Department to do this. In fact it doesn't call on them to actually do anything, except to “support” some (unspecified!) “reform”. Does this make sense? If the IRS is doing something you don't like, and you're going to take time to petition the Executive about it, and the IRS is part of the Executive, shouldn't your petition at least include a request that the IRS stop doing the thing you don't like?

So what to think when one sees a petition like this that could so obviously call for an immediate and substantive improvement, and doesn't even do that?

The null hypothesis to explain this is that the guy who wrote up the petition is just some doofus who doesn't know what he's doing. There are a lot of those around. Suppose 17 people wrote up petitions to address this problem; two of them are actually any good; but the one that happened to get viral traction on the Internet is not one of those two.

An alternative hypothesis is the conspiracy theory: the IRS, or the Executive, promoted or even created this particular petition to draw off energy and attention from the two that might actually result in something happening. I'm not sure this is worth considering. As Pierre-Simon Laplace said, when asked why he didn't consider such a possibility in a similar situation, “I had no need of that hypothesis.”

A variation on this question asks why Cory Doctorow, who one might suppose would know better, is promoting this petition. Here, the null hypothesis is that Doctorow is also some doofus who doesn't know what he's doing. The conspiracy theory version is somewhat more interesting.

[Other articles in category /politics] permanent link

Sat, 05 Oct 2013

PIGS in SPACE!!!

Today I was at the Pittsburgh Perl Workshop and I gave a talk, because that's my favorite part of a workshop: getting to give a talk.

The talk is about Moonpig, the billing system that Rik Signes and I wrote in Perl. Actually it's about Moonpig as little as possible because I didn't think the audience would be interested in the details of the billing system. (They are very interesting, and someone who is actually interested in a billing system, or in a case study of a medium-sized software system, would enjoy a three-hour talk about the financial architecture of Moonpig. But I wasn't sure that person would be at the workshop.) Instead the talk is mostly about the interesting technical underpinnings of Moonpig. Here's the description:

Moonpig is an innovative billing and accounting system that Rik Signes and I worked on between 2010 and 2012, totaling about twenty thousand lines of Perl. It was a success, and that is because Rik and I made a number of very smart decisions along the way, many of which weren't obviously smart at the time.

(I went to sleep too late the night before, slept badly, and woke up at 6:30 and couldn't go back to sleep. I spent an hour wandering around Oakdale looking for a place that served breakfast before 8 AM, and didn't find one. Then I was in a terrible mood. But for this talk, that was just right. I snarled and cursed at all the horrible problems, and by the end of the talk I felt pretty good.)

You don't want to hear about the billing and accounting end of Moonpig, so I will discuss that as little as possible, to establish a context for the clever technical designs we made. The rest of the talk is organized as a series of horrible problems and how we avoided, parried, or mitigated them:

- Times and time zones suck

- Floating-point arithmetic sucks

- It sucks to fix your mangled data after an automated process fails

- Testing a yearlong sequence of events sucks

- It sucks to have your automated test accidentally send a bunch of bogus invoices to the customers

- Rounding errors suck

- Relational databases usually suck

- Modeling objects in the RDB really really sucks

- Perl's garbage collection sucks

- OO inheritance sucks

Moonpig, however, does not suck.

Some of the things I'll talk about will include the design of our web API server and how it played an integral role in the system, our testing strategies, and our idiotically simple (but not simply idiotic) persistent storage solution. An extended digression on our pervasive use of Moose roles will be deferred to the lightning talks session on Sunday.

Much of the design is reusable, and is encapsulated in modules that have been released to CPAN or that are available on GitHub, or both.

Slides are available here. Video may be forthcoming.

Share and enjoy.

[ Addendum 20131217: I wrote up the talk contents in detail and posted them here. ]

[ Addendum 20131217: The video of the talk is available on YouTube, and has been since October, but I forgot to mention it. Unfortunately, the sound quality is poor; I tend to wander around a lot when I talk, and that confuses the microphone. Many thanks to Dan Wright for the video and especially for putting on PPW. ]

[Other articles in category /talk] permanent link

Tue, 24 Sep 2013

In which I revisit the pastimes of my misspent youth

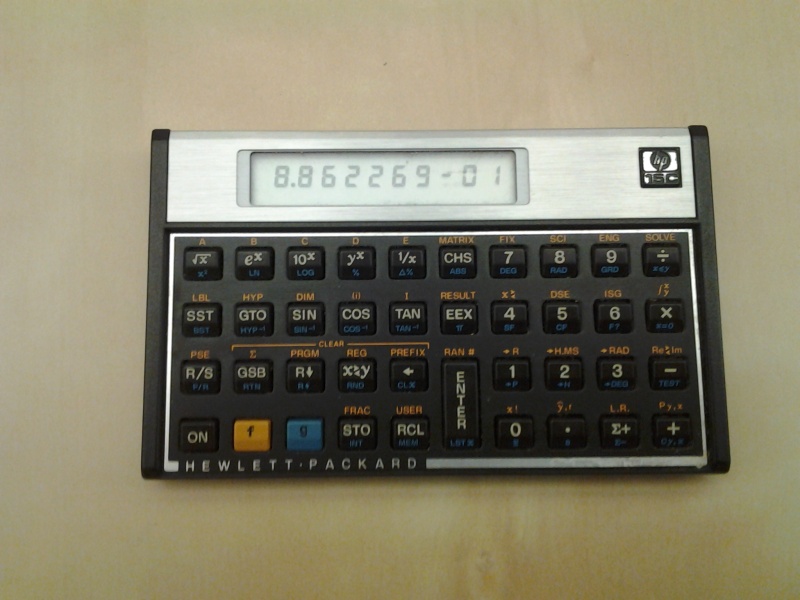

Last weekend I was at a flea market and saw an HP-15C calculator for $10. The HP-15C was the last pocket calculator I owned, some time before pocket calculators became ridiculous. It was a really nice calculator when I got it in 1986, one of my most prized possessions.

I lost my original one somewhere along the way, and also the spare I had bought from a friend against the day when I lost the original, and I was glad to get another one, even though I didn't have any idea what I was going to do with it. My phone has a perfectly serviceable scientific calculator in it, a very HP-ish one called RealCalc. (It's nice, you should check it out.) The 15C was sufficiently popular that someone actually brought it back a couple of years ago, in a new and improved version, with the same interface but 21st-century technology, and I thought hard about getting one, but decided I couldn't justify spending that much money on something so useless, even if it was charming. Finding a cheap replacement was a delightful surprise.

Then on Friday night I was sitting around thinking about which numbers n are such that !!10n^2+9!! is a perfect square, and I couldn't think of any examples except for 0, 2, and 4. Normally I would just run and ask the computer; it would take about two minutes to write the program and one second to run it. But I was out in the courtyard, it was a really nice evening, my favorite time of the year, the fading light was beautiful, and I wasn't going to squander it by going inside to brute-force some number problem.
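For comparison, the computer version of this linear search really is only a few lines. The following is a minimal Python sketch, a hypothetical illustration and not the author's program. It assumes Python 3.8 or later for `math.isqrt`, and the name `search` and its `limit` parameter are made up here. It uses exact integer square roots, so rounding cannot cause it to misjudge a perfect square.

```python
import math

def search(limit):
    """Return every n in [0, limit) for which 10*n**2 + 9 is a perfect square."""
    found = []
    for n in range(limit):
        value = 10 * n * n + 9
        root = math.isqrt(value)      # exact floor of the square root
        if root * root == value:      # perfect square iff floor(sqrt)**2 == value
            found.append(n)
    return found

print(search(200))  # [0, 2, 4, 18, 80, 154]
```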

But I did have the HP-15C in my pocket, and the HP-15C is programmable, by mid-1980s programmable calculator standards. That is to say, it is just barely programmable, but just barely is all you need to implement linear search for solutions of !!10n^2+9 = m^2!!. So I wrote the program and discovered, to my surprise, that I still remember many of the fussy details of how to program an HP-15C. For example, the SST button single-steps through the listing in program mode, but single-steps the execution in run mode. And instead of using the special test 5 to see if the x and y registers are equal, you might as well subtract them and use the x=0 test; it uses the same amount of program memory and you won't have to flip the calculator over to remember what test 5 is. And the x² and INT() operations are on the blue shift key.

Here's the program:

```
001 - 42,21,11  Label A: (subroutine)
002 -    43 11    x²
003 -        1
004 -        0    10
005 -       20    multiply
006 -        9    9
007 -       40    add
008 -       36    enter (dup)
009 -       11    √
010 -       36    enter (dup)
011 -    43 44    x ← int(x)
012 -       30    subtract
013 -    43 20    unless x=0:
014 -       31      STOP
015 -    43 32    return from subroutine
016 - 42,21,12  Label B:
017 -       40    +
018 -     45 0    load register 0
019 -    32 11    call A
020 -        2    2
021 - 44,40, 0    add to register 0
022 -    22 12    goto B
```
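The keystroke listing is easier to follow next to a rough Python transliteration of its control flow. This is an illustrative sketch, not an emulator. The calculator's four-level stack is replaced by ordinary variables. The hypothetical parameter `register_0` stands in for storage register 0, which is assumed to start at 0. STOP is modeled by having the generator yield the current n, so asking for the next value plays the role of pressing R/S.

Like the listing, the sketch advances n by 2. Skipping odd n loses nothing: for odd n, !!10n^2+9 \equiv 3 \pmod 8!!, but every odd square is !!\equiv 1 \pmod 8!!.

```python
import itertools
import math

def label_a(n):
    """Steps 001-015: compute sqrt(10*n**2 + 9) and report whether it is an integer."""
    x = math.sqrt(10 * n * n + 9)   # x², 10, ×, 9, +, √
    x = x - math.trunc(x)           # ENTER, INT, −  (leaves the fractional part)
    return x == 0                   # x=0 test: when true, the calculator executes STOP

def label_b(register_0=0):
    """Steps 016-022: test n, add 2 to register 0, and loop forever."""
    while True:
        if label_a(register_0):     # RCL 0, GSB A
            yield register_0        # STOP; pressing R/S resumes here
        register_0 += 2             # 2, STO + 0, GTO B

print(list(itertools.islice(label_b(), 6)))  # [0, 2, 4, 18, 80, 154]
```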

I see now that when I tested !!\sqrt{10n^2+9}!! for integrality, I did it the wrong way. My method used four steps:

```
010 -       36  -- enter (dup)
011 -    43 44  -- x ← INT(x)
012 -       30  -- subtract
013 -    43 20  -- unless x=0: …
```

but it would have been better to just test the fractional part of the value for zeroness:

```
   42 44  -- x ← FRAC(x)
   43 20  -- unless x=0: …
```
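The two integrality tests can be compared directly. In this minimal Python sketch, the function names are hypothetical. `math.trunc` stands in for the calculator's INT and `math.modf` for FRAC. For the positive values involved here, both split off the fractional part by truncation, so the two tests accept exactly the same inputs.

```python
import math

def is_integer_via_int(x):
    """Original four-step test: ENTER, INT, subtract, x=0?"""
    return x - math.trunc(x) == 0

def is_integer_via_frac(x):
    """Shorter two-step test: FRAC, x=0?"""
    fractional, _whole = math.modf(x)
    return fractional == 0

# Both tests classify sqrt(10n² + 9) identically for every n checked.
for n in range(1000):
    root = math.sqrt(10 * n * n + 9)
    assert is_integer_via_int(root) == is_integer_via_frac(root)
```

Either way, the test is only as good as the square root feeding it. Once !!10n^2+9!! has more digits than the arithmetic carries (10 digits on the calculator, 53 bits for a Python float), a floating-point √ can no longer be trusted, and an exact integer square root is the safer choice.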

Saving two instructions might not seem like a big deal, but it takes the calculator a significant amount of time to execute two instructions. The original program takes 55.2 seconds to find n=80; with the shorter code, it takes only 49.2 seconds, about an 11% improvement. And when your debugging tool can only display a single line of numeric operation codes, you really want to keep the program as simple as you can.

Besides, stuff should be done right. That's why it's called "right".
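For the record, the two timings for finding n=80 give a relative saving of

$$\frac{55.2 - 49.2}{55.2} = \frac{6.0}{55.2} \approx 0.109,$$

so the shorter test lets the search finish in a little under 90% of the original time.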

But I kind of wish I had that part of my brain back. Who knows what useful thing I would be able to remember if I wasn't wasting my precious few brain cells remembering that the back-step key ("BST") is on the blue shift, and that "42,21,12" is the code for "subroutine B starts here".

Anyway, the program worked, once I had debugged it, and in short order (by 1986 standards) produced the solutions n=18, 80, 154, which was enough to get my phone to search the OEIS and find the rest of the sequence. The OEIS entry mentioned that the solutions have the generating function

$$\frac{2x^2(1+2x+9x^2+2x^3+x^4)}{1-38x^3+x^6}$$